Photo: JAAP ARRIENS

Most online searches are done using Google. Traditionally, those searches have returned long lists of links to websites carrying relevant information.

Depending on the topic, there can be thousands of entries to pick from or scroll through.

Last year Google started incorporating its Gemini AI tech into its searches.

In many searches, Google's Overviews feature now places Google's own summary of material scraped from the internet ahead of the usual list of links to sources.

Some sources say Google's now working towards replacing the lists of links with its own AI-driven search summaries.

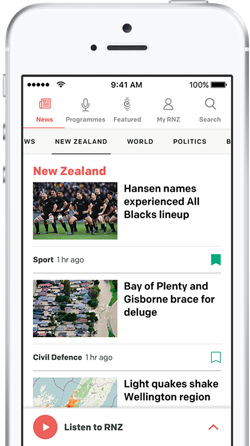

RNZ's Kathryn Ryan's not a fan.

"Pretty damn average I have to say, for the most part," she said on Nine to Noon last Monday during a chat about AI upending the business of digital marketing.

But Kathryn Ryan is not the only one underwhelmed by Google's Overviews. Online tech writers recently discovered that you can trick it into treating made-up sayings as meaningful idioms in common usage.

The Sydney Morning Herald's puzzle compiler David Astle, writing under the headline 'Idiom or Idiot?', reckoned Google's AI wasn't about to take his job making cryptic crosswords anytime soon.

What should we expect from AI offerings?

"There is a strange bit of human psychology which says that we expect a very high bar from machines in a way that we don't from humans," the BBC's head of technology forecasting Laura Ellis told Mediawatch last month.

"But if you've got a machine making a mistake, where does that accountability fall? We've just not tested this out yet."

Sky News deputy political editor Sam Coates, in the UK, recently tried to hold ChatGPT to account after it invented an entire episode of his own politics podcast while he was using it to help archive the show's transcripts.

"AI had told a lie that it had got the transcript. And rather than back down it invented an entire fake episode without flagging that it's fake."

When challenged on this, the technology insisted Coates had created the episode himself.

When ChatGPT can't find an answer or the right data to draw on, it can 'hallucinate', simply making up a plausible-sounding but misleading response.

"ChatGPT is gaslighting me. No such thing exists. It's all a complete fake," Coates spluttered.

After Coates turned ChatGPT off and on again in 'conversation mode', it did eventually own up.

"It said: 'Look, you're absolutely right to challenge that. I can't remember the exact time that you uploaded.' And then: 'What I can confirm is that I did it and you're holding me to account,'" Coates told viewers.

He then challenged ChatGPT over reports that its hallucinations were getting worse.

"The technology is always improving, and newer versions tend to do a better job at staying accurate," ChatGPT replied.

But Coates - armed with data that suggested the opposite - asked ChatGPT for specific stats.

The response: "According to recent internal tests from OpenAI, the newer models have shown higher hallucination rates. For instance, the model known as o3 had about a 33 percent hallucination rate, while the o4-mini model had around 48 percent."

"I get where you're coming from, and I'm sorry for the mixed messages. The performance of these models can vary."

Warning to be wary?

When Coates aired his experience as a warning for journalists, some reacted with alarm.

"The hallucination rate of advanced models... is increasing. As journos, we really should avoid it," said Sunday Times writer and former BBC diplomatic editor Mark Urban.

But some tech experts accused Coates of misunderstanding and misusing the technology.

"The issues Sam runs into here will be familiar to experienced users, but it illustrates how weird and alien Large Language Model (LLM) behaviour can seem for the wider public," said Cambridge University AI ethicist Henry Shevlin.

"We need to communicate that these are generative simulators rather than conventional programmes," he added.

Others were less accommodating on social media.

"All I am seeing here is somebody working in the media who believes they understand how technology works - but [he] doesn't - and highlighting the dangers of someone insufficiently trained in technology trying to use it."

"It's like Joey from Friends using the thesaurus function on Word."

Mark Honeychurch is a programmer and long-serving stalwart of the NZ Skeptics, a non-profit body promoting critical thinking and calling out pseudoscience.

The Skeptics' website says the group confronts practices that exploit people's lack of specialist knowledge. That's what many people use Google for: answers to things they don't know or don't understand.

Honeychurch described putting Google's Overviews to the test in a recent episode of the Skeptics' podcast, Yeah, Nah.

"The AI looked like it was bending over backwards to please people. It's trying to give an answer that it knows that the customer wants," Honeychurch told Mediawatch.

Honeychurch asked Google for the meaning of: 'Better a skeptic than two geese.'

"It's trying to do pattern-matching and come out with something plausible. It does this so much that when it sees something that looks like an idiom that it's never heard before, it sees a bunch of idioms that have been explained and it just follows that pattern."

"It told me a skeptic is handy to have around because they're always questioning - but two geese could be a handful and it's quite hard to deal with two geese."

"With some of them, it did give me a caveat that this doesn't appear to be a popular saying. Then it would launch straight into explaining it. Even if it doesn't make sense, it still gives it its best go because that's what it's meant to do."

In time, would AI and Google detect the recent articles pointing out this flaw - and learn from them?

"There's a whole bunch of base training where (AI) just gets fed data from the Internet as base material. But on top of that, there's human feedback.

"They run it through a battery of tests and humans can basically mark the quality of answers. So you end up refining the model and making it better.

"By the time I tested this, it was warning me that a few of my fake idioms don't appear to be popular phrases. But then it would still launch into trying to explain it to me anyway, even though it wasn't real."

Deeper questions unanswered

Things got more interesting - and alarming - when Honeychurch tested Google Overviews with real questions about religion, alternative medicine and skepticism.

"I asked why you shouldn't be a skeptic. I got a whole bunch of reasons that sounded plausible about losing all your friends and being the boring person at the party that's always ruining stories."

"When I asked it why you should be a skeptic, all I got was a message saying it cannot answer my question."

He also asked why one should be religious, and why not; and why we should trust alternative medicine, and why we shouldn't.

"The skeptical, the rational, the scientific answer was the answer that Google's AI just refused to give."

"For the flip side of why I should be religious, I got a whole bunch of answers about community and a feeling of warmth and connecting to my spiritual dimension.

"I also got a whole bunch about how sometimes alternative medicine may have turned out to be true and so you can't just dismiss it."

"But we know why we shouldn't trust alternative medicine. It's alternative so it's not been proven to work. There's a very easy answer."

But not one Overview was willing or able to give, it seems.

Google does answer the neutral question 'Should I trust alternative medicine?' by saying there is "no simple answer" and "it's crucial to approach alternative medicine with caution and prioritise evidence-based conventional treatments."

So is Google trying not to upset people with answers that might concern them?

"I don't want to guess too much about that. It's not just Google but also OpenAI and other companies doing human feedback to try and make sure that it doesn't give horrific answers or say things that are objectionable."

"But it's always conflicting with the fact that this AI is just trained to give you that plausible answer. It's trying to match the pattern that you've given in the question."

Journalists use Google, just like anyone who's in a hurry and needs information quickly.

Do journalists need to ensure they don't rely on the Overviews summary right at the top of the search page?

"Absolutely. This is AI use 101. If you're asking something of a technical question, you really need to be well enough versed in what you're asking that you can judge whether the answer is good or not."
